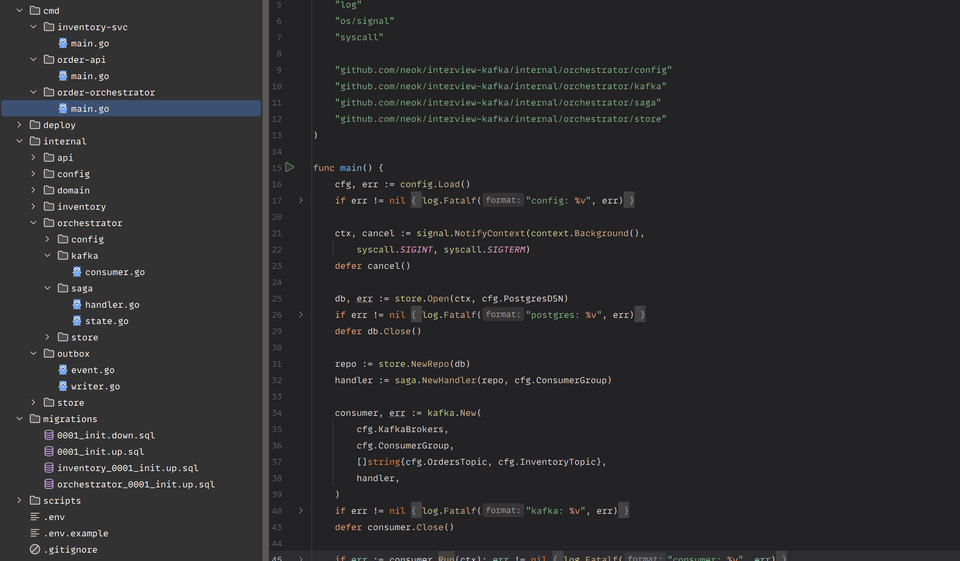

Saga in Go with Kafka, PostgreSQL, and the Outbox Pattern

Outbox, Saga and Kafka in Go

Lately I've been preparing for a system design interview (in today’s market, maintaining your skills isn’t optional), and one of the topics that always comes up is "how do you build a reliable event-driven service". So I built a small project to actually do it, not just talk about it: an order pipeline, three Go services, Postgres, Kafka, Debezium, the whole thing in docker-compose. It took a weekend. This post is what I learned, mostly the patterns that I think are worth knowing if you ever touch Kafka from Go.

The problem:

You write an HTTP handler, it inserts an order into the database, then publishes an event to Kafka. Looks fine in code review. Then production happens.

    db.Insert(order)
    producer.Send(orderEvent) // network blip → event lost

Or the other way around: the event is published to Kafka, the database transaction rolls back, and now your inventory service is reserving stock for an order that doesn't exist. There is no way to make these two writes atomic without two-phase commit (2PC), and 2PC is dead in modern stacks. I see this in real codebases all the time. So the first lesson is just this: the gap exists and you can't ignore it.

Outbox pattern

The fix is simple. You don't publish from the app at all. You write the event to an outbox table in the same transaction as the business data, and a separate process ships it to Kafka.

    func (r *OrderRepo) CreateOrderWithOutbox(ctx context.Context, o *domain.Order) error {
        // BeginFunc commits if the callback returns nil and rolls back otherwise,
        // so the order row and the outbox row succeed or fail together.
        return pgx.BeginFunc(ctx, r.db.Pool, func(tx pgx.Tx) error {
            if _, err := tx.Exec(ctx, `INSERT INTO orders ...`, o.ID, ...); err != nil {
                return err
            }
            // Don't drop the marshal error: a bad payload should abort the transaction.
            payload, err := json.Marshal(envelope(o))
            if err != nil {
                return err
            }
            _, err = tx.Exec(ctx, `
                INSERT INTO outbox (aggregate_type, aggregate_id, event_type, payload)
                VALUES ($1, $2, $3, $4)`,
                "order", o.ID, "OrderCreated", payload,
            )
            return err
        })
    }

Both rows commit, or neither does. The application code never touches Kafka. That's the whole pattern, and it's boring, which is why it works. A bonus that nobody talks about: the outbox table is also a free audit log. You can replay it. You can grep it. You can show it to the support team when they ask "did this event actually fire".
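The envelope helper isn't shown in the snippet above. Here is a minimal sketch of what such an envelope could look like, assuming a hypothetical Envelope struct and hypothetical Order fields (none of these names, nor the two-argument signature, come from the project). The part that matters later is a stable event ID that consumers can dedupe on.

```go
package main

import (
	"crypto/rand"
	"encoding/json"
	"fmt"
	"time"
)

// Order is a hypothetical stand-in for domain.Order.
type Order struct {
	ID         string `json:"id"`
	CustomerID string `json:"customer_id"`
	TotalCents int64  `json:"total_cents"`
}

// Envelope is an assumed wire format: event metadata plus the raw payload.
type Envelope struct {
	EventID    string          `json:"event_id"`
	EventType  string          `json:"event_type"`
	OccurredAt time.Time       `json:"occurred_at"`
	Data       json.RawMessage `json:"data"`
}

// newEventID returns a random version-4 UUID string using only the stdlib.
func newEventID() (string, error) {
	b := make([]byte, 16)
	if _, err := rand.Read(b); err != nil {
		return "", err
	}
	b[6] = (b[6] & 0x0f) | 0x40 // version 4
	b[8] = (b[8] & 0x3f) | 0x80 // RFC 4122 variant
	return fmt.Sprintf("%x-%x-%x-%x-%x", b[0:4], b[4:6], b[6:8], b[8:10], b[10:16]), nil
}

// envelope wraps an order in an Envelope with a fresh event ID.
func envelope(eventType string, o Order) (Envelope, error) {
	data, err := json.Marshal(o)
	if err != nil {
		return Envelope{}, err
	}
	id, err := newEventID()
	if err != nil {
		return Envelope{}, err
	}
	return Envelope{
		EventID:    id,
		EventType:  eventType,
		OccurredAt: time.Now().UTC(),
		Data:       data,
	}, nil
}

func main() {
	env, err := envelope("OrderCreated", Order{ID: "o-1", CustomerID: "c-1", TotalCents: 1999})
	if err != nil {
		panic(err)
	}
	out, err := json.Marshal(env)
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```

The event ID is generated once, when the outbox row is written, so every redelivery of the same row carries the same ID.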

Why Debezium and not a polling worker

You can write a Go cron that runs SELECT * FROM outbox WHERE published = false every second and publishes to Kafka. People do this and it kind of works. But you pay for it. You add latency, because each event waits for the next tick. You need a published flag and an index on it, and both contend with the write path. Two pollers? Now you need locking. Crash recovery? You build it yourself.

Debezium reads the Postgres write-ahead log, the same stream Postgres uses for replication. It's basically free (no polling queries against your tables), has built-in offset tracking, survives crashes, and ships with an Outbox Event Router SMT (single message transform) that maps aggregate_id → Kafka key, so you get per-aggregate ordering for free.
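For reference, a rough sketch of what registering such a connector might look like, written as a small Go program that prints the JSON body you would POST to Kafka Connect's /connectors endpoint. The property names are options of Debezium's Postgres connector and Outbox Event Router; the connector name, host, credentials, and topic prefix are placeholders of mine, and I'm assuming the outbox table also has an id column (the router needs one). Check everything against the Debezium version you run.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// outboxConnectorConfig builds a Kafka Connect registration payload for the
// Debezium Postgres connector with the Outbox Event Router SMT.
// All argument values and credentials are placeholders (assumptions).
func outboxConnectorConfig(name, dbHost, dbName string) map[string]any {
	return map[string]any{
		"name": name,
		"config": map[string]string{
			"connector.class":    "io.debezium.connector.postgresql.PostgresConnector",
			"plugin.name":        "pgoutput",
			"database.hostname":  dbHost,
			"database.port":      "5432",
			"database.user":      "debezium",  // placeholder
			"database.password":  "change-me", // placeholder
			"database.dbname":    dbName,
			"topic.prefix":       "app", // placeholder
			"table.include.list": "public.outbox",

			// Outbox Event Router: map this post's column names onto the
			// fields the SMT expects (its defaults are aggregatetype,
			// aggregateid, type, payload).
			"transforms":                                  "outbox",
			"transforms.outbox.type":                      "io.debezium.transforms.outbox.EventRouter",
			"transforms.outbox.route.by.field":            "aggregate_type",
			"transforms.outbox.table.field.event.key":     "aggregate_id",
			"transforms.outbox.table.field.event.type":    "event_type",
			"transforms.outbox.table.field.event.payload": "payload",
			// Debezium's default topic pattern; the project's real topic
			// names may differ.
			"transforms.outbox.route.topic.replacement": "outbox.event.${routedByValue}",
		},
	}
}

func main() {
	body, err := json.MarshalIndent(outboxConnectorConfig("outbox-connector", "postgres", "orders"), "", "  ")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(body))
}
```

The line that buys the per-aggregate ordering is table.field.event.key: whatever column it points at becomes the Kafka record key.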

Saga, but the orchestrated kind

Once events are flowing, the next problem is multi-step workflows. Reserve stock, charge a card, create a shipment, send email. Any step can fail. You need a saga. There are two flavors, choreography (every service listens to every event and figures out what to do) and orchestration (one coordinator decides). I picked orchestration. It's easier to debug, easier to add new steps, and you can actually draw the state machine on a whiteboard in an interview. The orchestrator is a Kafka consumer that owns one tiny table:

    CREATE TABLE saga_state (
        order_id        UUID PRIMARY KEY,
        current_step    TEXT NOT NULL,
        completed_steps JSONB NOT NULL
    );

That's the entire memory of the saga. No frameworks. No DSLs (domain-specific languages). Just a row per order. Each event triggers a transition, and I wrapped the whole thing in a single function so the handlers stay tiny:

    repo.Apply(ctx, eventID, group, orderID, func(cur *SagaSnapshot) (*SagaUpdate, error) {
        if cur != nil {
            return nil, nil // duplicate, skip
        }
        return &SagaUpdate{
            NextStep:   StepAwaitStock,
            AppendStep: EventOrderCreated,
            Outbox: []OutboxRow{{
                AggregateType: "order.commands",
                AggregateID:   orderID,
                EventType:     "ReserveStock",
                Envelope:      buildEnvelope(items),
            }},
        }, nil
    })

Inside Apply, one transaction does the dedupe check, loads the saga state, calls your transition function, upserts the new state, and writes the outbox rows. Commit. Debezium ships it. The orchestrator service has zero Kafka producer code. None. It's a consumer that writes to Postgres. That's it.
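To make the steps inside Apply concrete, here is an in-memory sketch of the same flow. It is not the project's implementation: MemRepo, the Transition type, the AwaitPayment step, and the StockReserved/ChargeCard names are made up for illustration, and a mutex stands in for the Postgres transaction.

```go
package main

import (
	"fmt"
	"sync"
)

type OutboxRow struct{ AggregateType, AggregateID, EventType string }

type SagaSnapshot struct {
	Step      string
	Completed []string
}

type SagaUpdate struct {
	NextStep, AppendStep string
	Outbox               []OutboxRow
}

// Transition decides what happens next; returning (nil, nil) means "ignore".
type Transition func(cur *SagaSnapshot) (*SagaUpdate, error)

// MemRepo stands in for Postgres; the mutex plays the role of the transaction.
type MemRepo struct {
	mu        sync.Mutex
	processed map[string]bool
	sagas     map[string]SagaSnapshot
	outbox    []OutboxRow
}

func NewMemRepo() *MemRepo {
	return &MemRepo{processed: map[string]bool{}, sagas: map[string]SagaSnapshot{}}
}

func (r *MemRepo) Apply(eventID, group, orderID string, fn Transition) error {
	r.mu.Lock()
	defer r.mu.Unlock()

	key := group + "/" + eventID
	if r.processed[key] { // 1. dedupe check
		return nil
	}
	var cur *SagaSnapshot // 2. load saga state (nil if the saga is new)
	if s, ok := r.sagas[orderID]; ok {
		cur = &s
	}
	upd, err := fn(cur) // 3. run the transition
	if err != nil {
		return err // nothing written yet, so this behaves like a rollback
	}
	r.processed[key] = true
	if upd == nil {
		return nil
	}
	next := SagaSnapshot{Step: upd.NextStep} // 4. upsert the new state
	if cur != nil {
		next.Completed = append(next.Completed, cur.Completed...)
	}
	next.Completed = append(next.Completed, upd.AppendStep)
	r.sagas[orderID] = next
	r.outbox = append(r.outbox, upd.Outbox...) // 5. write outbox rows
	return nil
}

func onOrderCreated(orderID string) Transition {
	return func(cur *SagaSnapshot) (*SagaUpdate, error) {
		if cur != nil {
			return nil, nil // saga already started
		}
		return &SagaUpdate{NextStep: "AwaitStock", AppendStep: "OrderCreated",
			Outbox: []OutboxRow{{AggregateType: "order.commands", AggregateID: orderID, EventType: "ReserveStock"}}}, nil
	}
}

func onStockReserved(orderID string) Transition {
	return func(cur *SagaSnapshot) (*SagaUpdate, error) {
		if cur == nil || cur.Step != "AwaitStock" {
			return nil, nil // unknown saga or unexpected step
		}
		return &SagaUpdate{NextStep: "AwaitPayment", AppendStep: "StockReserved",
			Outbox: []OutboxRow{{AggregateType: "order.commands", AggregateID: orderID, EventType: "ChargeCard"}}}, nil
	}
}

func main() {
	repo := NewMemRepo()
	steps := []struct {
		eventID string
		fn      Transition
	}{
		{"evt-1", onOrderCreated("o-1")},
		{"evt-1", onOrderCreated("o-1")}, // redelivery, skipped by the dedupe check
		{"evt-2", onStockReserved("o-1")},
	}
	for _, s := range steps {
		if err := repo.Apply(s.eventID, "saga", "o-1", s.fn); err != nil {
			panic(err)
		}
	}
	fmt.Println(repo.sagas["o-1"].Step, len(repo.outbox)) // AwaitPayment 2
}
```

The redelivered evt-1 leaves no trace: one saga row, two outbox rows, no duplicate ReserveStock command.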

Idempotency or you'll lose your weekend

In the usual setup, where you commit offsets after processing, Kafka gives you at-least-once delivery, which means consumers see duplicates. Rebalances replay uncommitted offsets. Producers retry on transient errors. Consumers crash between the side effect and the offset commit. If your handler is not idempotent, you will silently double-charge a customer or double-reserve stock at 3am. The pattern is one table, same in every service:

    CREATE TABLE processed_events (
        event_id       UUID NOT NULL,
        consumer_group TEXT NOT NULL,
        processed_at   TIMESTAMPTZ NOT NULL DEFAULT now(),
        PRIMARY KEY (event_id, consumer_group)
    );

Every handler starts with the same insert:

    ct, err := tx.Exec(ctx, `
        INSERT INTO processed_events (event_id, consumer_group)
        VALUES ($1, $2) ON CONFLICT DO NOTHING`,
        eventID, group)
    if err != nil {
        return err
    }
    if ct.RowsAffected() == 0 {
        return nil // already processed
    }
    // real work goes here, in the same transaction

Insert into the dedupe table, do the work, commit together. A primary key (PK) conflict means you've seen this event before, so skip silently. Combined with at-least-once delivery, you get effectively-exactly-once for state changes. Side effects you can't roll back, like sending an email or charging a card via Stripe, need a different mechanism: idempotency keys on the external API (Stripe's Idempotency-Key header, S3's deterministic object keys). When that's not available, fall back to your own pending/done table. But the pattern above covers 90% of cases.
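For the side effects you can't roll back, one way to get a usable idempotency key is to derive it deterministically from the event, so a redelivered event always produces the same key. A small sketch, assuming a hypothetical IdempotencyKey helper and a fake payments client that behaves the way an idempotency-key-aware API is expected to (a repeated key returns the first result instead of charging again):

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
)

// IdempotencyKey derives a stable key from the action and the event ID.
// The same event always maps to the same key, across retries and restarts.
func IdempotencyKey(eventID, action string) string {
	sum := sha256.Sum256([]byte(action + ":" + eventID))
	return hex.EncodeToString(sum[:16])
}

// FakePayments imitates an external API that honours idempotency keys.
// It is a test double for illustration, not a real client.
type FakePayments struct {
	charges map[string]string // idempotency key -> charge ID
	count   int               // number of charges actually made
}

func NewFakePayments() *FakePayments {
	return &FakePayments{charges: map[string]string{}}
}

// Charge creates a charge, or returns the earlier one if the key was seen.
func (p *FakePayments) Charge(key string, cents int64) string {
	if id, ok := p.charges[key]; ok {
		return id
	}
	p.count++
	id := fmt.Sprintf("ch_%d", p.count)
	p.charges[key] = id
	return id
}

func main() {
	api := NewFakePayments()
	key := IdempotencyKey("evt-42", "charge")

	first := api.Charge(key, 1999)
	second := api.Charge(key, 1999) // same event redelivered after a crash

	fmt.Println(first == second, api.count) // true 1
}
```

With a real API the key would travel in the request (for Stripe, in the Idempotency-Key header), and the provider does the deduplication.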

Per-key ordering, the rule that bites everyone

Kafka only guarantees order within a partition. Across partitions, all bets are off.

So the rule is simple:

- If two events must stay in order, they need the same partition key. Pick the key based on what actually needs ordering.

- For order.events, I key by customer_id. Every event for one customer lands on the same partition and gets processed in order, one after another.

- For inventory.events, I key by order_id. Ordering is only needed per order, so two orders from the same customer can be processed in parallel.

Get this wrong and the bug is brutal. Stock gets released before it's reserved. Payments get refunded before they're charged. You won't see it in tests, you won't see it under low load. You'll find it three weeks later in a postmortem, trying to explain to your manager why a customer was charged twice.

Get it right and it's literally one config field.
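A toy illustration of why the key matters. The partitioner below uses FNV-1a purely as a stand-in (the Java client's default partitioner uses murmur2, so real partition numbers will differ), and the Event type and route helper are hypothetical. The property it shows is the one that counts: same key, same partition, original order preserved.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

// Event is a minimal record: a partition key and an event type.
type Event struct{ Key, Type string }

// partitionFor hashes the key onto one of numPartitions partitions.
// FNV-1a is an assumption for this sketch, not Kafka's actual hash.
func partitionFor(key string, numPartitions int) int {
	h := fnv.New32a()
	h.Write([]byte(key))
	return int(h.Sum32() % uint32(numPartitions))
}

// route appends each event to its partition, keeping arrival order
// within every partition.
func route(events []Event, numPartitions int) map[int][]Event {
	out := make(map[int][]Event)
	for _, e := range events {
		p := partitionFor(e.Key, numPartitions)
		out[p] = append(out[p], e)
	}
	return out
}

func main() {
	events := []Event{
		{"customer-1", "OrderCreated"},
		{"customer-2", "OrderCreated"},
		{"customer-1", "OrderPaid"},
		{"customer-2", "OrderCancelled"},
		{"customer-1", "OrderShipped"},
	}
	for p, evs := range route(events, 4) {
		fmt.Println("partition", p, evs)
	}
}
```

Events for customer-1 always end up together and in order. Events for different customers may or may not share a partition, and nothing is guaranteed about their relative order.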

What I would tell my past self

A few things to know:

- Outbox before anything else. Don't publish from the app "just for now", that's how you end up with dual-writes that nobody owns.

- Use github.com/twmb/franz-go. Pure Go, modern, supports the new consumer protocol. Skip the CGO clients (confluent-kafka-go) unless you have a real reason.

- One transaction per event. Dedupe row + state change + outbox row, atomically.

- State machines belong in code, not in tables. The saga_state table stores the result of decisions. The rules live in version-controlled Go.

- Test idempotency by replaying. Reset your consumer group to offset zero, restart, verify nothing changed. If something changed, you have a bug.
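The replay check from the last bullet can be sketched in memory before you try it against a real consumer group. Everything here is hypothetical (a toy Inventory consumer with an optional dedupe set); the idea is simply to process the log, snapshot the state, process the same log again, and compare.

```go
package main

import "fmt"

// Event is a stock adjustment with a unique ID.
type Event struct {
	ID    string
	SKU   string
	Delta int
}

// Inventory is a toy consumer. With dedupe enabled it mirrors the
// processed_events pattern; with it disabled it is a naive handler.
type Inventory struct {
	dedupe    bool
	stock     map[string]int
	processed map[string]bool
}

func NewInventory(dedupe bool) *Inventory {
	return &Inventory{dedupe: dedupe, stock: map[string]int{}, processed: map[string]bool{}}
}

// Handle applies one event, skipping it if it was already processed.
func (inv *Inventory) Handle(e Event) {
	if inv.dedupe {
		if inv.processed[e.ID] {
			return
		}
		inv.processed[e.ID] = true
	}
	inv.stock[e.SKU] += e.Delta
}

// Snapshot renders the state; fmt prints maps in sorted key order,
// so equal states give equal strings.
func (inv *Inventory) Snapshot() string { return fmt.Sprint(inv.stock) }

// replayIsNoop processes the log, then processes it again (as if the
// consumer group had been reset to the start) and reports whether the
// state stayed the same.
func replayIsNoop(inv *Inventory, log []Event) bool {
	for _, e := range log {
		inv.Handle(e)
	}
	before := inv.Snapshot()
	for _, e := range log {
		inv.Handle(e)
	}
	return before == inv.Snapshot()
}

func main() {
	log := []Event{
		{"evt-1", "sku-a", -2},
		{"evt-2", "sku-b", -1},
		{"evt-3", "sku-a", +2},
		{"evt-4", "sku-b", -3},
	}
	fmt.Println("with dedupe:", replayIsNoop(NewInventory(true), log))     // true
	fmt.Println("without dedupe:", replayIsNoop(NewInventory(false), log)) // false
}
```

The naive handler fails the check because sku-b is decremented a second time. That is the bug you want to find on your laptop and not in production.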

Conclusion

After a weekend of building, the one thing I didn't expect: all three services ended up looking almost identical. Same outbox table. Same processed_events table. Same thin consumer that decodes a record, calls a function, commits the offset. None of them touch Kafka directly to publish anything.

Outbox + Debezium + a small saga table works well.
